The MLOps Architect’s Guide to Building AI Factories for Scale

From Concept to Production: The MLOps Architecture Blueprint

Transitioning a promising machine learning model into a reliable, scalable service demands a robust architectural blueprint. This MLOps architecture orchestrates the entire lifecycle, ensuring that machine learning and AI services evolve from experiments into production-grade assets. The core principle is to treat ML models as software artifacts, applying rigorous CI/CD practices to data, code, and models. A well-designed blueprint enables data scientists to experiment freely while giving engineers a stable deployment pathway—a balance often achieved with guidance from experienced machine learning consultants.

The foundational layer is data and feature management. Here, raw data is ingested, validated, and transformed into reusable features. A feature store is critical, acting as a centralized repository. For example, a customer churn prediction model might use a feature like avg_session_length_last_7d. The feature store serves a consistent version, preventing each team from recalculating it independently.

- Step 1: Define and Compute Features. Using a framework like Feast:

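    # Define the entity and the feature view
    from datetime import timedelta

    from feast import Entity, FeatureView, Field
    from feast.types import Float32

    # Current Feast releases expect Entity objects here rather than bare strings.
    customer = Entity(name="customer", join_keys=["customer_id"])

    customer_session_stats_view = FeatureView(
        name="customer_session_stats",
        entities=[customer],
        ttl=timedelta(days=7),
        schema=[Field(name="avg_session_length_last_7d", dtype=Float32)],
        online=True,
        # Placeholder: a Feast data source that points at the output
        # of your transformation job.
        source=your_transformation_job_output,
    )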

- Step 2: Materialize features to the online store for low-latency serving:

    feast materialize-incremental $(date +%Y-%m-%d)

- Measurable Benefit: This architecture eliminates training-serving skew and can reduce feature development time by up to 70%, a key efficiency gain highlighted by any expert machine learning consultancy.

The next pillar is automated model training and validation, where CI/CD for ML begins. Code, data, and model pipelines are versioned using tools like DVC and Git. A pipeline triggers on new data or code commits. The model is trained, evaluated against a champion model on a hold-out set, and packaged if it passes defined metrics (e.g., AUC > 0.85).

- A CI pipeline (e.g., GitHub Actions) runs on a merge to the main branch.

- It executes a training script that outputs a serialized model (e.g., model.joblib) and performance metrics.

- The pipeline validates the model against business and performance thresholds.

- Upon validation, the model artifact is registered in a model registry (like MLflow Model Registry) with a new version.
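The validation step in the list above can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: the helper names `roc_auc` and `passes_gate` are hypothetical, and the gate assumes the champion's AUC was measured on the same hold-out set. The 0.85 floor comes from the example threshold mentioned earlier.

```python
from typing import Optional, Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """ROC AUC as the chance that a random positive outranks a random negative."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative label")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(positives) * len(negatives))


def passes_gate(candidate_auc: float,
                champion_auc: Optional[float],
                min_auc: float = 0.85) -> bool:
    """Package the candidate only if it clears the floor and is not worse than the champion."""
    if candidate_auc <= min_auc:
        return False
    return champion_auc is None or candidate_auc >= champion_auc
```

In a CI job, a `False` result would typically fail the step so that the registration stage never runs. The pairwise AUC loop is quadratic and only meant for small hold-out sets; a library implementation is the better choice at scale.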

Finally, deployment and monitoring ensure the model delivers sustained value in production. The approved model is deployed as a containerized microservice, often using Kubernetes for scaling. Canary deployments mitigate risk. Crucially, the system monitors for model drift in prediction distributions and data quality, triggering retraining pipelines automatically. This closed-loop automation transforms isolated projects into a true factory for machine learning and AI services, enabling rapid iteration and consistent ROI at scale.

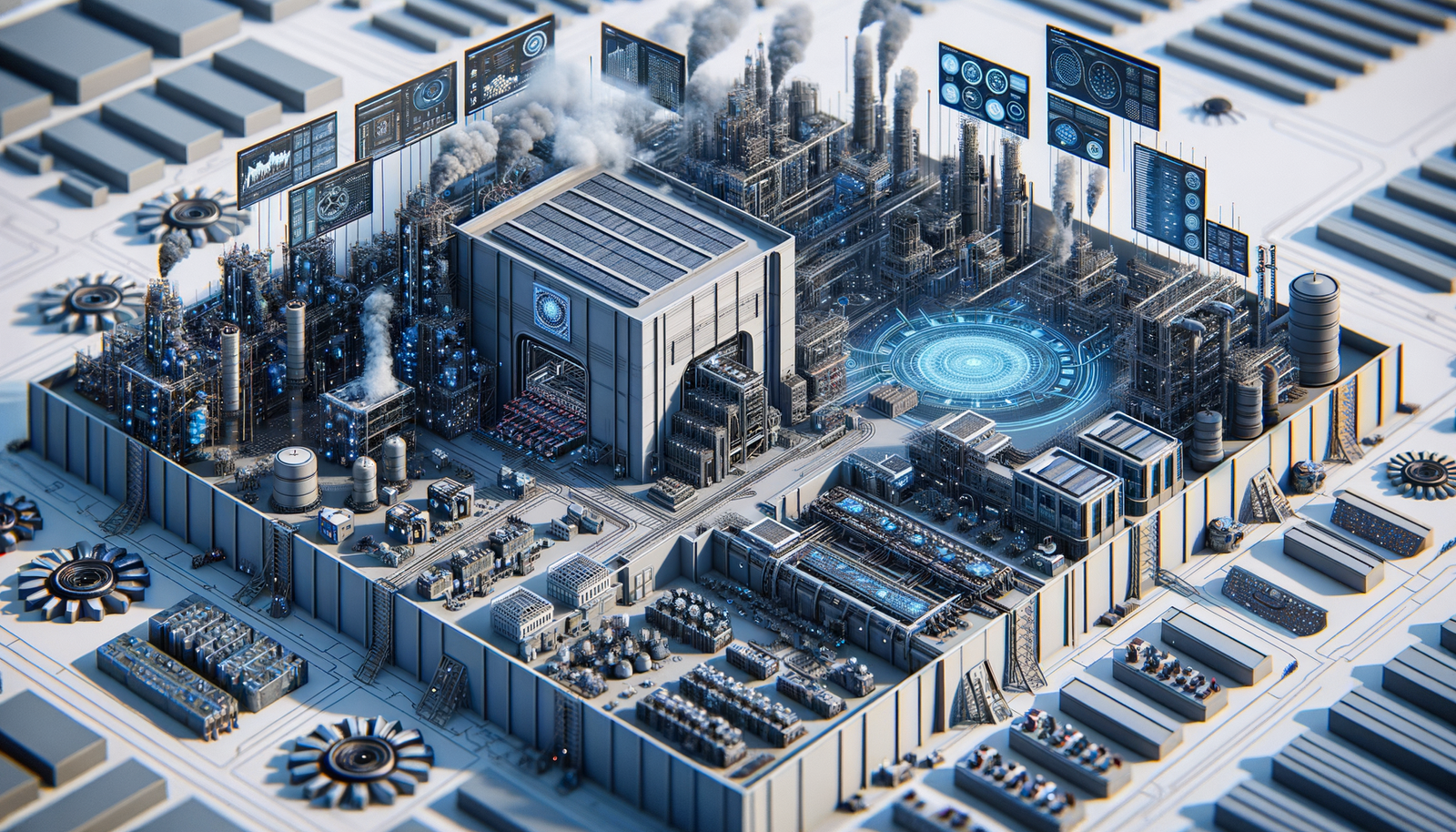

Defining the AI Factory: A Systems View of MLOps

An AI factory is a cohesive, automated system designed for the continuous and reliable production of machine learning models. It operationalizes MLOps at an industrial scale, treating development and deployment as a high-velocity assembly line. This systems view integrates people, processes, and technology to transform raw data into dependable predictions with the same rigor as traditional software.

The architecture comprises interconnected pipelines. The data pipeline ingests, validates, and processes raw data into versioned features. The model pipeline automates training, evaluation, and versioning. The serving pipeline handles deployment, monitoring, and rollback. A feature store is central, ensuring consistency between training and serving while enabling reuse.

Consider automating model retraining with Apache Airflow:

- Data Extraction & Validation: A task pulls new transaction data and runs quality checks.

    # Example data validation with Pandas
    def validate_data(df):
        assert df['amount'].isnull().sum() == 0, "Null values found in 'amount'"
        assert (df['amount'] >= 0).all(), "Negative transaction amounts"
        return df

- Feature Engineering: Validated data is transformed (e.g., into rolling_spend_7d) and written to the feature store.

- Model Training & Evaluation: A new model trains on the latest features. Its performance is compared against the current champion in a staging environment.

- Conditional Deployment: If the new model outperforms the champion by a defined threshold (e.g., 2% higher AUC-ROC), it is automatically deployed via a canary release.
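To make the feature engineering step concrete, here is one way a feature such as rolling_spend_7d could be computed. It is a sketch under stated assumptions: transactions arrive as `(timestamp, amount)` pairs, and the window covers the seven days up to and including the `as_of` time. The function name and signature are illustrative, not part of any feature store API.

```python
from datetime import datetime, timedelta
from typing import Iterable, Tuple


def rolling_spend_7d(transactions: Iterable[Tuple[datetime, float]],
                     as_of: datetime) -> float:
    """Sum of transaction amounts in the 7 days up to and including `as_of`."""
    window_start = as_of - timedelta(days=7)
    # Ignoring anything after `as_of` keeps future data out of training rows.
    return sum(amount for ts, amount in transactions
               if window_start < ts <= as_of)
```

Computing the value relative to an explicit `as_of` timestamp is what lets the same function serve both historical training rows and live requests, which is the consistency the feature store is meant to guarantee.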

The measurable benefits are substantial: reducing model update cycles from weeks to days, increasing reliability via automated testing, and enforcing governance. This systematic foundation is essential for scaling machine learning and AI services across an enterprise.

Implementing such a system often requires specialized expertise. Engaging machine learning consultants or a machine learning consultancy proves invaluable. They provide the architectural blueprint, help select and integrate tools (e.g., MLflow, Kubeflow), and establish the CI/CD practices that unify the factory. Their external perspective ensures the system is built for scale and agility, avoiding costly debt. The goal is a self-service platform where data scientists can safely experiment and deploy, while IT maintains control over security, cost, and stability.

Core MLOps Principles for Scalable Architecture

Building a production-grade AI factory requires embedding foundational principles into the system’s DNA. These principles transform ad-hoc projects into reliable, scalable machine learning and AI services.

The first principle is automated CI/CD for ML. ML systems require validation of both code and data. A robust pipeline automates testing, training, and deployment. For example, a pipeline triggered by a new commit or data drift might run:

- Data Validation: Use a library like Great Expectations to assert schema and statistical properties.

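    import great_expectations as ge

    # Legacy dataset-style Great Expectations API; it may not be available in newer releases.
    # train_df is a pandas DataFrame holding the training data.
    dataset = ge.dataset.PandasDataset(train_df)
    dataset.expect_column_values_to_be_between("feature_x", min_value=0, max_value=100)
    dataset.save_expectation_suite("data_validation_suite.json")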

- Model Training & Evaluation: Train the model and compare performance against a champion model.

- Model Packaging: Containerize the model, its dependencies, and a REST server using Docker.

- Staged Deployment: Deploy the container as a shadow endpoint to compare live traffic performance before full promotion.

The measurable benefit is reducing manual release cycles from weeks to hours, ensuring faster iteration.
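The shadow deployment step can be evaluated with a simple comparison of paired predictions. The sketch below assumes that each mirrored request produced one score from the live model and one from the shadow model; `shadow_report` and its `tolerance` default are hypothetical choices for illustration.

```python
from typing import Sequence


def shadow_report(live_preds: Sequence[float],
                  shadow_preds: Sequence[float],
                  tolerance: float = 0.05) -> dict:
    """Compare shadow predictions with live ones made on the same requests."""
    if len(live_preds) != len(shadow_preds):
        raise ValueError("predictions must be paired per request")
    if not live_preds:
        raise ValueError("no mirrored requests to compare")
    diffs = [abs(a - b) for a, b in zip(live_preds, shadow_preds)]
    agreeing = sum(1 for d in diffs if d <= tolerance)
    return {
        "requests": len(diffs),
        "agreement_rate": agreeing / len(diffs),  # share within tolerance
        "max_abs_diff": max(diffs),
    }
```

A low agreement rate does not by itself mean the shadow model is worse, only that it behaves differently enough to warrant a closer look before promotion.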

The second core principle is reproducibility and versioning. Every artifact must be immutable and traceable. Version datasets, model binaries, and environment configurations. Tools like DVC for data and MLflow for models are essential. Logging an experiment with MLflow ensures any model can be recreated:

    import mlflow

    mlflow.set_experiment("customer_churn")

    with mlflow.start_run():
        mlflow.log_param("learning_rate", 0.01)
        mlflow.log_metric("accuracy", 0.92)
        mlflow.sklearn.log_model(model, "model")

This practice is critical for auditability, debugging, and rollback.

Third, implement continuous monitoring and observability. Monitor predictive performance, data drift, and infrastructure health. Key metrics include prediction latency, throughput, and business KPIs. Automated alerts on statistical drift enable proactive retraining, preventing silent degradation. The benefit is sustained model accuracy and reliability, directly impacting ROI.

Finally, architect for modularity and loose coupling. Model training, serving, and monitoring should be distinct, scalable components communicating via well-defined APIs (e.g., model endpoints, event streams). This allows teams to update the feature store independently from the training pipeline. This modular approach is where engaging experienced machine learning consultants proves invaluable. A seasoned machine learning consultancy can design these decoupled systems, avoiding monolithic pitfalls. The result is an architecture where individual machine learning and AI services can be developed, scaled, and maintained independently, creating a resilient AI factory.

Laying the Foundational Infrastructure for MLOps

The journey to a scalable AI factory begins with a robust, automated, and reproducible infrastructure layer. This foundation is a cohesive platform supporting the entire ML lifecycle. The core principle is infrastructure as code (IaC), ensuring your environment is versioned, consistent, and recreatable. For teams building custom models, this means provisioning scalable compute clusters. For those leveraging cloud machine learning and AI services, IaC automates configuring managed endpoints, feature stores, and training pipelines.

A critical first step is establishing a version control system for all assets—application code, data schemas, model definitions, and infrastructure scripts. Consider this project structure:

- infra/ – Terraform or CloudFormation scripts.

- src/ – Training and inference code.

- pipelines/ – Orchestration definitions (e.g., Kubeflow Pipelines).

- models/ – Model artifacts and metadata.

- data/ – Schemas and versioned dataset references.

Next, automate environment setup. Using Terraform, you can define a scalable compute cluster. This snippet provisions a managed Kubernetes cluster on Google Cloud:

    resource "google_container_cluster" "mlops_cluster" {
      name               = "ml-training-cluster"
      location           = "us-central1"
      initial_node_count = 3

      node_config {
        machine_type = "n1-standard-4"
        oauth_scopes = [
          "https://www.googleapis.com/auth/cloud-platform"
        ]
      }
    }

The measurable benefit is reducing environment setup from days to minutes, eliminating "works on my machine" issues. This technical orchestration is often where machine learning consultants provide immense value, designing blueprints for optimal cost and performance.

With compute in place, focus on data and model artifact management. Implement a centralized model registry (like MLflow Model Registry) and a feature store. This creates a single source of truth for model versions and serving-ready data. The workflow becomes:

1. A data scientist commits a new model version to the registry.

2. The CI/CD pipeline automatically deploys it to a staging endpoint.

3. Automated tests validate performance against a baseline.

4. Upon approval, the model is promoted to production.
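The four-step workflow above can be modelled with a toy registry to show the state transitions involved. This is an in-memory stand-in written for illustration only; it is not the MLflow Model Registry API, and the class and method names are hypothetical. It also assumes approval is automatic once the candidate beats the production baseline on a single metric.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ModelVersion:
    version: int
    stage: str = "None"
    metrics: Dict[str, float] = field(default_factory=dict)


class InMemoryRegistry:
    """Toy stand-in for a model registry, used to illustrate stage transitions."""

    def __init__(self) -> None:
        self.versions: List[ModelVersion] = []

    def register(self, metrics: Dict[str, float]) -> ModelVersion:
        mv = ModelVersion(version=len(self.versions) + 1, metrics=dict(metrics))
        self.versions.append(mv)
        return mv

    def production(self) -> Optional[ModelVersion]:
        return next((v for v in self.versions if v.stage == "Production"), None)

    def promote(self, version: int, metric: str = "auc") -> bool:
        """Stage the version, then promote it only if it beats the current baseline."""
        candidate = self.versions[version - 1]
        candidate.stage = "Staging"
        current = self.production()
        if current is not None and candidate.metrics[metric] <= current.metrics[metric]:
            return False  # stays in Staging for review
        if current is not None:
            current.stage = "Archived"  # keep the old version for rollback
        candidate.stage = "Production"
        return True
```

Archiving rather than deleting the previous production version is what makes rollback a metadata change instead of a retraining job.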

This pipeline automation is the heartbeat of the AI factory, turning experimental code into reliable services. For organizations without deep in-house expertise, partnering with a machine learning consultancy can fast-track this phase, providing battle-tested templates. The outcome is a foundation where experimentation is safe, deployment is routine, and scaling is a matter of configuration.

Building a Scalable Compute and Data Platform

A scalable compute and data platform is the foundational engine of an AI factory, handling fluctuating workloads and growing data volumes. The core principle is to separate compute from storage, allowing each to scale independently. For storage, use cloud object stores like Amazon S3 as your single source of truth. Compute resources, like Kubernetes clusters, can then spin up on-demand to process this data, scaling to zero when idle to control costs.

The data pipeline must be robust and automated. Consider this Apache Airflow DAG snippet for orchestrating a daily feature engineering job:

    from datetime import datetime

    from airflow import DAG
    from airflow.providers.amazon.aws.operators.emr import EmrAddStepsOperator

    default_args = {'owner': 'data_team', 'start_date': datetime(2023, 1, 1)}

    # schedule_interval is the older spelling; Airflow 2.4+ prefers `schedule`.
    with DAG('daily_feature_pipeline', schedule_interval='@daily', default_args=default_args) as dag:
        # EMR step that submits the Spark feature engineering script
        spark_step = {
            'Name': 'CalculateFeatures',
            'ActionOnFailure': 'CONTINUE',
            'HadoopJarStep': {
                'Jar': 'command-runner.jar',
                'Args': ['spark-submit', 's3://code-bucket/feature_engineering.py']
            }
        }

        process_task = EmrAddStepsOperator(
            task_id='run_spark_job',
            job_flow_id='j-XXXXXXXXXXXXX',  # ID of an already-running EMR cluster
            steps=[spark_step]
        )

This approach provides measurable benefits: pipeline reliability through orchestration, reproducibility via code, and easy data backfilling.

For model training at scale, implement a job queueing system to manage GPU clusters. Tools like Kubernetes with Kubeflow allow you to submit training jobs scheduled on resource availability. Engaging machine learning consultants can help architect the right mix of spot and on-demand instances to slash training costs by 60-70% without sacrificing reliability.

Key architectural components include:

* Infrastructure as Code (IaC): Use Terraform to version and provision all resources.

* Data Versioning: Integrate tools like DVC or Delta Lake for full lineage tracking.

* Unified IAM: Centralize permissions across storage, compute, and machine learning and AI services.

* Monitoring and Observability: Instrument pipelines with metrics and logs aggregated to a central dashboard.

The measurable outcome is a reduction in time-to-insight and time-to-model. Data scientists spend less time on infrastructure and more time iterating. A successful implementation, often guided by a machine learning consultancy, transforms the platform from a bottleneck into a strategic asset.

Implementing MLOps Toolchains for CI/CD and Automation

A robust MLOps toolchain automates the lifecycle of machine learning and AI services. The core objective is a Continuous Integration and Continuous Delivery (CI/CD) pipeline designed for ML, managing code, data, and model artifacts cohesively.

The foundational step is Version Control for Everything. This includes dataset versioning (with DVC), model registry entries, and environment configurations. A git commit can trigger the pipeline:

- Continuous Integration (CI): The pipeline runs unit tests, builds a containerized environment, and executes integration tests. A GitHub Actions workflow snippet:

    - name: Train Model
      run: |
        python train.py --data-version ${{ inputs.data_version }}
    - name: Run Model Tests
      run: |
        pytest tests/model_validation.py

- Continuous Delivery (CD): Upon successful testing, the pipeline packages the model into a Docker container, pushes it to a registry, and updates a model registry with the new version.

- Automated Deployment: The toolchain promotes the staged model to pre-production for validation (like A/B testing) and finally to production. Measurable benefits include reducing manual deployment errors by over 70% and shortening deployment cycles from days to minutes.

Key toolchain components are an orchestrator (e.g., Argo Workflows), a model registry, and monitoring tools. Integrating data quality gates into the CI process is critical for data engineers.
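A data quality gate in CI can be as small as a function that returns a list of violations, with the job failing when the list is non-empty. The sketch below assumes rows are plain dictionaries and checks only null rates; the function name and the 1% default are illustrative assumptions rather than a standard.

```python
from typing import Dict, List, Optional


def quality_violations(rows: List[Dict[str, Optional[float]]],
                       required: List[str],
                       max_null_rate: float = 0.01) -> List[str]:
    """Return human-readable violations; an empty list means the gate passes."""
    if not rows:
        return ["dataset is empty"]
    violations = []
    for column in required:
        # A missing key is treated the same as an explicit null.
        nulls = sum(1 for row in rows if row.get(column) is None)
        rate = nulls / len(rows)
        if rate > max_null_rate:
            violations.append(
                f"{column}: null rate {rate:.1%} exceeds {max_null_rate:.1%}"
            )
    return violations
```

A CI step could print the violations and exit with a non-zero status when any are present, which blocks training on bad data without extra tooling.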

Engaging machine learning consultants accelerates this implementation, especially in integrating diverse tools. A proficient machine learning consultancy will design the pipeline and establish the feature store—a central repository for consistent features—vital for reducing training-serving skew. The final architecture enables true automation: a drop in data quality can automatically trigger alerts, retraining, and a new candidate model for review.

Orchestrating the End-to-End MLOps Lifecycle

Orchestrating the journey from model prototype to production asset is the core of a scalable AI factory. This lifecycle integrates machine learning and AI services into a repeatable process. It begins with data versioning and validation. Tools like DVC track datasets alongside code. Validate incoming data against a schema to catch drift early.

- Example Validation with Pandera:

    import pandas as pd
    import pandera as pa
    from pandera import Column, Check

    schema = pa.DataFrameSchema({
        "feature_a": Column(float, checks=Check.in_range(0, 100)),
        "feature_b": Column(int, nullable=False),
    })

    validated_df = schema.validate(df)

*Measurable Benefit*: Catches data quality issues that could degrade model performance by 20%+ before costly training runs.

Next, continuous training (CT) automates model retraining. Collaboration with machine learning consultants is valuable here to design the triggering logic—be it schedule, data drift, or performance decay. A CI/CD pipeline orchestrates this:

- Trigger: A scheduled job or a push to main initiates the pipeline.

- Build & Test: The environment builds, and unit tests execute.

- Train & Evaluate: The model trains on the latest validated data. Performance is evaluated against a test set and a champion model in staging.

- Register & Deploy: If the new model outperforms the baseline, it is versioned in a model registry and promoted.
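The triggering logic mentioned above (schedule, data drift, or performance decay) can be expressed as one small decision function. This is a sketch with assumed defaults: the 30-day maximum age and the 0.02 AUC drop are placeholders to tune per model, and `ModelStatus` is a hypothetical container for values your monitoring system would supply.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class ModelStatus:
    last_trained: datetime
    drift_score: float   # e.g. PSI of a key feature
    live_auc: float      # measured once ground truth arrives
    baseline_auc: float  # AUC recorded at deployment time


def retrain_reasons(status: ModelStatus,
                    now: datetime,
                    max_age: timedelta = timedelta(days=30),
                    drift_threshold: float = 0.2,
                    max_auc_drop: float = 0.02) -> List[str]:
    """List every trigger that currently fires; an empty list means no retraining."""
    reasons = []
    if now - status.last_trained >= max_age:
        reasons.append("schedule")
    if status.drift_score > drift_threshold:
        reasons.append("data_drift")
    if status.baseline_auc - status.live_auc > max_auc_drop:
        reasons.append("performance_decay")
    return reasons
```

Returning the reasons rather than a bare boolean makes the pipeline run easier to audit, since the trigger can be logged alongside the new model version.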

The final phase is continuous monitoring. Monitor for concept drift and data drift. Implementing a robust dashboard is a key deliverable from a machine learning consultancy.

- Key Metrics: Prediction latency, throughput, error rates, and drift statistics (e.g., Population Stability Index).

- Automated Response: Set alerts for metric thresholds. For significant drift, configure the pipeline to trigger retraining or rollback.

Actionable Insight: Start by automating model deployment (CD) before full continuous training. Use a canary deployment, routing 5% of traffic to the new model to compare live performance. This de-risks updates. End-to-end orchestration of these stages transforms isolated experiments into a governed, scalable pipeline.
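One common way to implement the 5% canary split is deterministic hashing of a stable request key. The sketch below assumes such a key exists (for example a user or session ID); `route_to_canary` is a hypothetical helper, and in practice the split is often configured in the service mesh or ingress layer rather than in application code.

```python
import hashlib


def route_to_canary(request_key: str, canary_percent: float = 5.0) -> bool:
    """Deterministically send roughly `canary_percent` of keys to the new model."""
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # buckets 0..9999
    return bucket < canary_percent * 100
```

Because the decision depends only on the key, a given user keeps hitting the same model variant for the duration of the rollout, which keeps the live comparison clean.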

Designing a Reproducible Model Training Pipeline

A reproducible model training pipeline is the core engine of an AI factory, turning ad-hoc experimentation into a reliable, automated process. It ensures every model iteration can be recreated, audited, and compared. This systematic approach is a primary deliverable when engaging machine learning consultants.

The foundation is containerization. Package code, dependencies, and system tools into a Docker image for an immutable runtime environment. This is essential for leveraging scalable machine learning and AI services.

    FROM python:3.9-slim
    WORKDIR /app
    COPY requirements.txt .
    RUN pip install --no-cache-dir -r requirements.txt
    COPY src/ /app/src

Next, implement version control for everything: the Docker image, training dataset (via hashes), hyperparameters, and the model binary. Use a metadata store to log artifacts for each run. With MLflow:

    import mlflow

    mlflow.set_tracking_uri("http://mlflow-server:5000")

    with mlflow.start_run():
        mlflow.log_param("learning_rate", 0.01)
        mlflow.log_artifact("data/train_dataset.parquet")
        # ... training logic ...
        mlflow.sklearn.log_model(model, "model")
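Versioning a dataset "via hashes" usually means recording a content digest next to the run. A minimal sketch, assuming the dataset is a single local file; `file_sha256` is a hypothetical helper name.

```python
import hashlib


def file_sha256(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # iter() with a sentinel keeps reading until read() returns b"".
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()
```

The resulting hex string can be logged as a run parameter (for example with `mlflow.log_param`), so two runs can be checked for identical training data without storing the file twice.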

Orchestrate the pipeline using a tool like Apache Airflow:

- Data Validation: Check schema, distributions, and quality.

- Feature Engineering: Apply consistent transformations, saving fitted scalers/encoders as artifacts.

- Model Training: Execute the training script within the container.

- Model Evaluation: Score on a held-out validation set, capturing key metrics.

- Model Registration: If metrics pass a threshold, register the new version to a model registry.

The measurable benefits are substantial: reducing deployment time from weeks to hours, eliminating configuration drift, and attributing performance changes to specific modifications. This operational maturity is the hallmark of a professional machine learning consultancy, enabling teams to reliably support multiple projects.

Managing the MLOps Deployment and Monitoring Loop

The production AI system is governed by a continuous, automated loop integrating machine learning and AI services into IT operations. A robust deployment strategy starts with a model registry, a versioned repository for trained artifacts. Using MLflow:

* Log the model: mlflow.sklearn.log_model(sk_model=model, artifact_path="sales_forecast")

* Promote to staging via the registry UI or API.

* Deploy via CI/CD: A pipeline trigger, like a new model version in "Staging", initiates a deployment job that packages the model into a container and deploys it to Kubernetes or a managed endpoint.

The measurable benefit is consistency and rollback capability. Engaging machine learning consultants can help architect these resilient pipelines from the outset.

Once deployed, continuous monitoring is critical. Track system health (latency, throughput) and ML-specific metrics like data drift and concept drift.

- Instrument your serving component to log prediction requests and, when available, ground truth.

- Schedule a daily job to compare incoming feature data distributions against the training baseline using a metric like Population Stability Index (PSI).

- Set alerts for when drift metrics exceed a threshold (e.g., PSI > 0.2).

A simple drift check in Python, here using a two-sample Kolmogorov-Smirnov test as an alternative to PSI:

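    from scipy import stats

    training_dist = features_training['value']
    live_dist = features_live['value']

    # Two-sample Kolmogorov-Smirnov test: a small p-value suggests the distributions differ.
    drift_statistic, p_value = stats.ks_2samp(training_dist, live_dist)

    if p_value < 0.01:
        # trigger_alert is a placeholder for your alerting hook
        trigger_alert("Significant data drift detected in feature 'value'")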
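The Population Stability Index mentioned in the monitoring steps can be computed without extra dependencies. The version below is one common formulation, offered as a sketch: it assumes equal-width bins derived from the training baseline, clamps out-of-range live values into the edge bins, and floors empty bins at a small `eps` to avoid division by zero. Other binning choices (such as quantile bins) give different numbers.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float],
        actual: Sequence[float],
        bins: int = 10,
        eps: float = 1e-6) -> float:
    """Population Stability Index using equal-width bins from the baseline range."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp into edge bins
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A daily job could call `psi(training_values, live_values)` per feature and raise an alert when the result exceeds the 0.2 threshold used earlier.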

Monitoring creates a feedback loop. An alert on performance decay should automatically trigger a retraining pipeline, evaluation, and conditional promotion, turning a static model into a self-improving asset. A machine learning consultancy excels at designing these automated governance workflows, ensuring the loop is maintainable. The ultimate benefit is sustained model accuracy and business impact.

Conclusion: Operationalizing the AI Factory Vision

Operationalizing the AI Factory vision transforms isolated experiments into a scalable, reliable engine for value creation. It establishes a production line for machine learning and AI services where models are versioned assets with automated CI/CD.

A critical step is implementing a unified feature store, decoupling feature engineering from model development to ensure consistency.

    from datetime import timedelta

    from feast import BigQuerySource, Entity, FeatureStore, FeatureView, Field
    from feast.types import Float32

    # Define entity and feature views
    driver = Entity(name="driver", join_keys=["driver_id"])

    driver_stats_fv = FeatureView(
        name="driver_hourly_stats",
        entities=[driver],
        ttl=timedelta(hours=2),
        schema=[Field(name="avg_trip_length", dtype=Float32)],
        source=BigQuerySource(table="feast.drivers"),
    )

    # Apply definitions
    store = FeatureStore(repo_path=".")
    store.apply([driver, driver_stats_fv])

This registers a reusable feature definition that both training and serving can draw on, which supports reproducible performance.

The deployment strategy must be robust. Canary deployments, managed through a production-grade CI/CD pipeline of the kind a machine learning consultancy would set up, mitigate risk. A pipeline step might use MLflow to promote a model only after it passes automated validation. Measurable benefits include a >70% reduction in failed deployments and rollback capability in seconds.

Sustaining the factory requires monitoring and governance:

1. Data Drift Detection: Statistical tests on feature distributions.

2. Model Performance Decay: Tracking accuracy/AUC, triggering retraining.

3. Explainability and Fairness: Integrating SHAP values into logs for bias auditing.

Engaging expert machine learning consultants is pivotal to establish these advanced facets, ensuring outputs are scalable, responsible, and compliant.

Ultimately, the operational AI Factory is a socio-technical system. It empowers data scientists with self-service tools while enforcing governance through code. It delivers ROI through accelerated time-to-market, reduced overhead, and systematic scaling of AI. The architect curates this ecosystem, ensuring seamless integration of machine learning and AI services into the enterprise IT landscape.

Measuring Success: Key MLOps Metrics and KPIs

To ensure your AI factory delivers value, track operational and business metrics beyond model accuracy. Effective measurement bridges experimental machine learning and AI services and reliable production. Monitor across four pillars: Model Performance, System Health, Process Efficiency, and Business Impact.

Track Model Performance to detect decay. Monitor prediction drift, concept drift, and data quality. Implement automated checks with Evidently AI. For example, to generate a data drift report that compares a current snapshot against a reference dataset:

    from evidently.metrics import DataDriftTable
    from evidently.report import Report
    import pandas as pd

    reference_data = pd.read_parquet('reference_data.parquet')
    current_data = pd.read_parquet('kafka_stream_snapshot.parquet')

    drift_report = Report(metrics=[DataDriftTable()])
    drift_report.run(reference_data=reference_data, current_data=current_data)
    drift_report.save_html('data_drift_report.html')

If drift exceeds a threshold, trigger retraining. The benefit is maintained model efficacy.

Monitor System Health for reliability:

* Model Latency: P95/P99 inference time.

* Throughput: Predictions per second.

* Service Availability: Uptime percentage.

* Compute Utilization: GPU/CPU and memory usage.

Instrument your endpoint to export metrics to Prometheus. This visibility is critical for scaling and a core deliverable from a machine learning consultancy.
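For the latency metrics above, P95 and P99 can be derived from raw samples with the nearest-rank method. This is a sketch for offline analysis, assuming you have the individual request latencies at hand; monitoring systems such as Prometheus typically estimate percentiles from histogram buckets instead, so their numbers can differ slightly.

```python
import math
from typing import Sequence


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    # Multiply before dividing to avoid floating-point error in the rank.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]
```

Tail percentiles are more informative than the mean for inference services, because a small share of slow requests can dominate the user experience while barely moving the average.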

Measure Process Efficiency to accelerate the lifecycle:

* Lead Time for Changes: From commit to production.

* Deployment Frequency.

* Mean Time to Recovery (MTTR).

* Model Retraining Cost.

Optimizing these reduces friction and cost. Automating retraining with Kubeflow can slash lead time from weeks to hours—a key offering from machine learning consultants.
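The process metrics listed above reduce to simple arithmetic over timestamps. The sketch below assumes you can export commit, deployment, failure, and recovery times from your CI/CD and incident tooling; the function names are hypothetical, and the mean is used where some teams prefer the median.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def mean_duration(durations: List[timedelta]) -> timedelta:
    """Average of a non-empty list of durations."""
    return sum(durations, timedelta()) / len(durations)


def lead_time(changes: List[Tuple[datetime, datetime]]) -> timedelta:
    """Mean time from commit to production for (committed, deployed) pairs."""
    return mean_duration([deployed - committed for committed, deployed in changes])


def mttr(incidents: List[Tuple[datetime, datetime]]) -> timedelta:
    """Mean time to recovery for (failed, restored) pairs."""
    return mean_duration([restored - failed for failed, restored in incidents])


def deployment_frequency(deploy_count: int, window_days: int) -> float:
    """Deployments per day over the observation window."""
    return deploy_count / window_days
```

Tracking these values per model or per team over time shows whether automation work is actually shortening the path from commit to production.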

Finally, align with Business Impact. Connect model performance to outcomes like revenue lift, cost savings, or increased user engagement. Establish a feedback loop where business metrics influence model improvement priorities. Tracking this full spectrum transforms AI initiatives into a measurable, valuable factory, ensuring machine learning consultancy efforts deliver sustained ROI.

Future-Proofing Your MLOps Architecture

To keep your AI factory adaptable, design infrastructure around machine learning and AI services as modular components. Start with a containerized execution layer. Package all training and inference code into Docker containers, decoupling logic from the platform. This allows migration between clouds or on-premise hardware.

    FROM python:3.9-slim
    WORKDIR /app
    COPY requirements.txt .
    RUN pip install --no-cache-dir -r requirements.txt
    COPY train.py .
    CMD ["python", "train.py"]

Orchestrate these containers with platform-agnostic tools like Kubernetes or Kubeflow Pipelines.

Implement unified metadata and artifact tracking. Log every experiment, pipeline run, and deployed model with its code version, data version, parameters, and metrics. Tools like MLflow provide this. Enforcing this creates a single source of truth for reproducibility and audits—a key deliverable when engaging machine learning consultants.

Adopt a feature store to separate data transformation from model development. Data engineers own production-grade feature pipelines, while data scientists access consistent features. This prevents training-serving skew and accelerates new model development.

- Use an open-source feature store like Feast.

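    from datetime import timedelta

    from feast import BigQuerySource, Entity, FeatureView, Field
    from feast.types import Float32

    # Current Feast releases expect Entity objects rather than bare strings.
    driver = Entity(name="driver", join_keys=["driver_id"])

    driver_stats_fv = FeatureView(
        name="driver_activity",
        entities=[driver],
        ttl=timedelta(days=1),
        schema=[Field(name="avg_daily_trips", dtype=Float32)],
        source=BigQuerySource(table="feast.drivers"),
    )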

- Models request features by name, ensuring consistency.

Adopt a polyglot and multi-framework strategy. Support models from TensorFlow, PyTorch, and Scikit-learn. Standardize on interchange formats like ONNX for inference optimization. This prevents vendor lock-in. A comprehensive machine learning consultancy will stress this point, protecting investments against rapid AI evolution.

Finally, design for cost observability and optimization. Implement tagging for all compute resources and establish chargeback mechanisms. Use spot instances for fault-tolerant training and auto-scaling inference endpoints. The measurable benefit is direct control over operational expenditure, turning a cost center into an efficient profit driver. Building on these principles ensures your architecture scales and evolves gracefully.
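Chargeback built on resource tags amounts to grouping a billing export by tag value. A minimal sketch, assuming each resource record is a dictionary with a `cost` field and an optional `tags` mapping; the record shape and the `team` tag name are illustrative assumptions, not a cloud provider format.

```python
from collections import defaultdict
from typing import Dict, List


def chargeback(resources: List[dict], tag: str = "team") -> Dict[str, float]:
    """Total cost per tag value; untagged resources are grouped so they stay visible."""
    totals: Dict[str, float] = defaultdict(float)
    for resource in resources:
        owner = resource.get("tags", {}).get(tag, "untagged")
        totals[owner] += resource["cost"]
    return dict(totals)
```

Keeping an explicit "untagged" bucket is a deliberate choice: its size is a quick indicator of how well the tagging policy is being followed.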

Summary

This guide outlines the architectural blueprint for building scalable AI factories through robust MLOps practices. It details how to orchestrate the entire lifecycle of machine learning and AI services, from data management and automated training to deployment and continuous monitoring. Engaging experienced machine learning consultants or a specialized machine learning consultancy is emphasized as a critical success factor for designing modular, reproducible systems and implementing efficient CI/CD toolchains. By adopting these principles, organizations can transform ad-hoc projects into a production-grade factory, ensuring reliable, scalable, and valuable AI delivery.